Here’s what you’re actually asked to do — and how your clinical experience translates into this kind of work.

What clinicians are asked to do

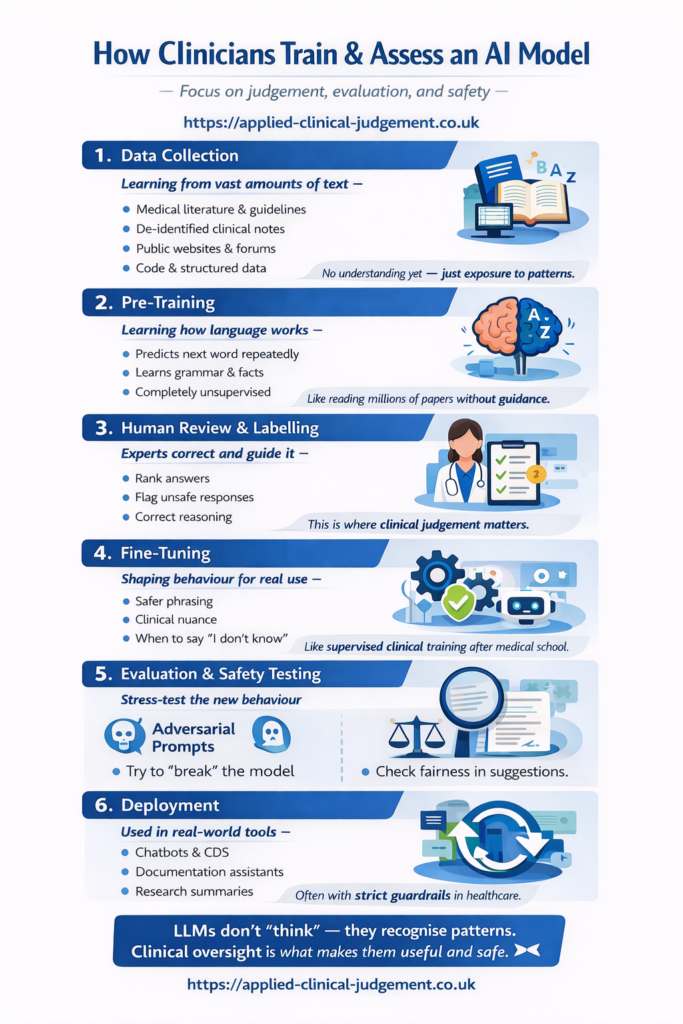

Health AI systems are built using three things: structured prompts, reference answers (sometimes called “gold standard” answers), and evaluation criteria. Clinicians are involved at each stage to ensure the system reflects real-world reasoning rather than confident-sounding but clinically problematic responses.

In practice, this means reading a clinical scenario and assessing how an AI responded; writing a reference answer that shows how a clinician would actually reason through it — including uncertainty, risk-flagging, and knowing when not to answer; scoring AI outputs against structured criteria covering safety, appropriateness, tone, and realism; and identifying responses that are plausible but clinically wrong, overconfident, or likely to mislead. The focus throughout is on reasoning quality, not speed.

Where clinical judgement is applied

Clinical judgement is applied at multiple stages of health AI training, including prompt development, reference answer creation, output evaluation, and quality assurance.

What a good reference answer looks like

When asked to define a “gold standard” response, clinicians aren’t expected to write the perfect textbook answer. They’re expected to reflect how a competent, cautious clinician actually thinks — balancing risks and benefits, acknowledging what isn’t known, safety-netting appropriately, and being explicit about when something should be escalated or referred rather than answered directly. Overconfident or overly comprehensive answers often score poorly.

Why clinicians specifically

Health AI systems learn from patterns. Without clinicians involved in training, those patterns can reward plausibility over safety — producing responses that sound right but apply poorly to real clinical scenarios. The contextual judgement clinicians bring — knowing when a situation is more complex than it appears, when a caveat matters, when a “correct” answer is still inappropriate — is precisely what data alone can’t provide.

What this work doesn’t involve

No coding or software development. No direct patient care. No identifiable patient data. No automation of your clinical judgement. This is evaluative, reflective work carried out remotely, typically task by task, on a flexible basis.

How it fits alongside clinical work

Most clinicians doing this work treat it as portfolio or supplementary income alongside NHS, private, or locum roles. Time commitment varies by platform and project, but the structure is task-based rather than shift-based — you’re not committing to set hours.